Use REST when building simple CRUD APIs, public endpoints, or where HTTP caching matters. Use GraphQL when your frontend needs flexible data shapes, you are aggregating multiple services, or building a backend-for-frontend layer. The debate has been running since 2015 and neither wins everywhere — both win in their right domain. The engineering mistake is applying either universally rather than matching the API style to the consumer's actual requirements. In 2026, a third contender has appeared for AI-era tooling APIs: MCP.

| Criteria | REST | GraphQL | Winner |
|---|---|---|---|
| Caching | Native HTTP caching, CDN-friendly | Requires custom caching layer | REST |
| Payload Flexibility | Fixed endpoints, over/under-fetching | Client specifies exact fields | GraphQL |
| Learning Curve | Low: standard HTTP conventions | Higher: schema, resolvers, tooling | REST |
| Multi-Source Aggregation | Multiple round-trips or BFF | Single query across sources | GraphQL |
| Monitoring & Debugging | Standard HTTP status codes | Mostly HTTP 200 with errors in the response body, custom error handling | REST |
| Real-Time Support | Webhooks, SSE | Subscriptions (built-in) | GraphQL |
| AI/LLM Integration | Direct tool calling via MCP | Possible but adds complexity | REST + MCP |

What has changed since the last round of this conversation: GraphQL's enterprise adoption accelerated dramatically, the N+1 problem got better tooling, REST found renewed relevance in public APIs and microservice communication, and a third contender — MCP (Model Context Protocol) — entered the picture for AI-era tooling APIs.

Where REST Wins

REST wins for public APIs. The reason is caching. REST's URL-per-resource model aligns perfectly with HTTP caching semantics — CDNs, browser caches, and proxy caches all understand GET /products/123 and can cache the response. A GraphQL POST to /graphql with a query in the body cannot be cached by most HTTP infrastructure without additional tooling.
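
To make the caching point concrete, here is a minimal sketch of a handler for GET /products/123 that emits an ETag and answers revalidation requests with 304. The in-memory `PRODUCTS` store, the `get_product` function, and the 60-second lifetime are assumptions for illustration, and the `If-None-Match` check is simplified to a single exact match.

```python
import hashlib
import json

# Hypothetical in-memory product store; stands in for a real database.
PRODUCTS = {"123": {"id": "123", "name": "Kettle", "price": 39.0}}


def get_product(product_id, request_headers):
    """Handle GET /products/<id> and return (status, headers, body)."""
    product = PRODUCTS.get(product_id)
    if product is None:
        return 404, {}, b""

    body = json.dumps(product, sort_keys=True).encode("utf-8")
    # ETag derived from the response bytes, so it changes when the data changes.
    etag = '"' + hashlib.sha256(body).hexdigest()[:16] + '"'
    headers = {
        "ETag": etag,
        # Shared caches (CDNs, proxies) may reuse the response for 60 seconds.
        "Cache-Control": "public, max-age=60",
        "Content-Type": "application/json",
    }

    # A cache revalidates by echoing the ETag back in If-None-Match.
    if request_headers.get("If-None-Match") == etag:
        return 304, headers, b""
    return 200, headers, body
```

Because the URL identifies the resource, any cache between client and server can apply these headers without knowing anything about the API.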

REST wins for inter-service communication in microservices. Service-to-service calls typically have well-defined, narrow contracts — "give me this order" not "give me these 14 fields from this order plus these 7 fields from the related user." The flexibility GraphQL provides is valuable at the client layer; it is unnecessary overhead between services where both sides of the contract are owned by the same organisation.

REST wins when you need a simple, learnable API for third-party developers. For developers who are not familiar with GraphQL, a REST API with good OpenAPI documentation is easier to understand and integrate against than a GraphQL API with an equivalent schema. Lower integration friction matters for developer adoption.

Where GraphQL Wins

GraphQL wins for the Backend for Frontend (BFF) pattern. A mobile app and a web app often need different data shapes from the same underlying data. With REST, you either build and maintain a separate endpoint per client, or build one endpoint that returns the superset and accept over-fetching. With GraphQL, each client requests exactly what it needs from one endpoint. The BFF use case is where GraphQL delivers its clearest ROI.
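
The idea of each client asking for its own shape can be sketched without a GraphQL server at all. The toy `select_fields` helper below is not GraphQL; it is an assumption-laden illustration in which a nested dict plays the role of the client's selection, and the `ORDER` record and both selections are made up.

```python
def select_fields(record, selection):
    """Return only the fields named in `selection`.

    `selection` maps a field name to True (take the value as-is) or to a
    nested selection dict (recurse into a dict or a list of dicts).
    """
    result = {}
    for field, sub in selection.items():
        value = record[field]
        if sub is True:
            result[field] = value
        elif isinstance(value, list):
            result[field] = [select_fields(item, sub) for item in value]
        else:
            result[field] = select_fields(value, sub)
    return result


# Hypothetical order record assembled by the backend.
ORDER = {
    "id": "o-1",
    "status": "shipped",
    "total": 58.5,
    "customer": {"id": "u-7", "name": "Ada", "email": "ada@example.com"},
    "items": [
        {"sku": "k-1", "title": "Kettle", "qty": 1, "price": 39.0},
        {"sku": "t-2", "title": "Tea", "qty": 3, "price": 6.5},
    ],
}

# The mobile client asks for a compact summary...
MOBILE_SELECTION = {"id": True, "status": True}
# ...while the web client asks for a richer shape from the same record.
WEB_SELECTION = {
    "id": True,
    "total": True,
    "customer": {"name": True},
    "items": {"title": True, "qty": True},
}
```

Both clients hit the same backend record, and neither receives fields it did not ask for, which is the property a real GraphQL schema and executor provide with validation and typing on top.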

GraphQL wins for highly relational data where clients have varying traversal needs. A social platform where some queries need "user → followers → their recent posts → comments" and others need just "user → basic profile" is a natural fit. The graph model reflects the actual shape of the data.

GraphQL wins for developer experience in products where the front-end team iterates faster than the back-end team. Frontend developers can ask for exactly the fields they need without waiting for a new API endpoint to be deployed. The iteration speed advantage is real and compounds over a product's lifetime.

“Use REST for public APIs and service-to-service calls. Use GraphQL for client-facing BFF layers where clients have varying data needs. Use both — they are not mutually exclusive.”

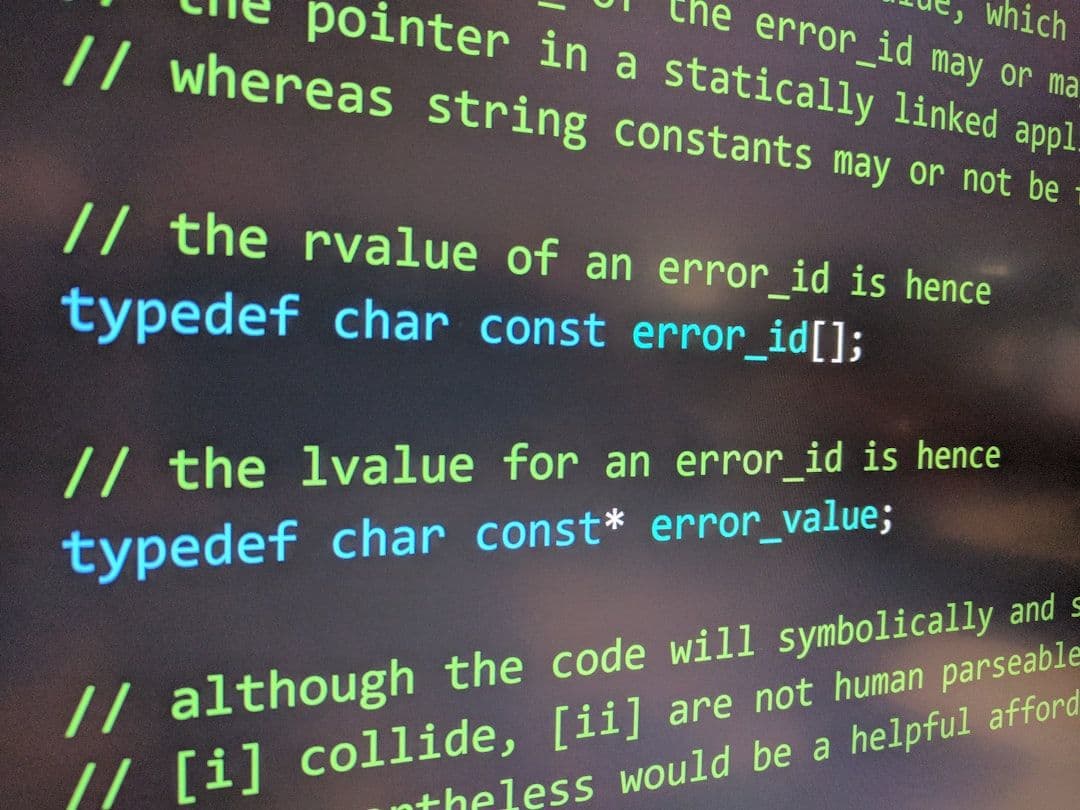

The N+1 Problem and DataLoader

The N+1 problem is the most commonly cited GraphQL performance issue: resolving a list of N items where each item triggers an additional database query produces N+1 total queries. A query for 100 users that loads each user's profile separately makes 101 database round trips instead of 2.

DataLoader (originally from Facebook) solves this with request batching and caching. Instead of fetching each item immediately, DataLoader collects all pending fetches during the current event loop tick and executes them as a single batched query. Resolvers still ask for one item at a time, but they do so through the loader's `load(key)` call rather than a direct query, so the resolver structure stays the same while the N+1 pattern disappears.
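
The batching mechanism can be sketched in a few dozen lines with `asyncio`. This is not the DataLoader library itself; `BatchLoader`, `fetch_profiles`, `PROFILES`, and `QUERY_LOG` are hypothetical names, and the sketch assumes the batch function returns values in the same order as the keys it was given.

```python
import asyncio


class BatchLoader:
    """Collects keys requested in one event loop tick into a single batch."""

    def __init__(self, batch_fn):
        self._batch_fn = batch_fn   # async: list of keys -> list of values
        self._cache = {}            # key -> Future, per-request memoization
        self._queue = []            # keys waiting for the next dispatch
        self._scheduled = False
        self._task = None

    def load(self, key):
        if key in self._cache:
            return self._cache[key]
        loop = asyncio.get_running_loop()
        future = loop.create_future()
        self._cache[key] = future
        self._queue.append(key)
        if not self._scheduled:
            self._scheduled = True
            # Runs after the callbacks that are already queued for this tick.
            loop.call_soon(self._start_dispatch)
        return future

    def _start_dispatch(self):
        # Keep a reference so the task is not garbage-collected mid-flight.
        self._task = asyncio.get_running_loop().create_task(self._dispatch())

    async def _dispatch(self):
        keys, self._queue = self._queue, []
        self._scheduled = False
        try:
            values = await self._batch_fn(keys)
        except Exception as exc:
            for key in keys:
                self._cache[key].set_exception(exc)
            return
        for key, value in zip(keys, values):
            self._cache[key].set_result(value)


QUERY_LOG = []  # records each simulated database round trip

# Hypothetical data: profiles keyed by user id.
PROFILES = {uid: {"user_id": uid, "bio": f"bio {uid}"} for uid in range(100)}


async def fetch_profiles(user_ids):
    """Simulates one `SELECT ... WHERE user_id IN (...)` round trip."""
    QUERY_LOG.append(list(user_ids))
    return [PROFILES[uid] for uid in user_ids]


async def resolve_profile(loader, user_id):
    # Resolver code still asks for one profile at a time.
    return await loader.load(user_id)


async def main():
    loader = BatchLoader(fetch_profiles)  # one loader per request
    return await asyncio.gather(*(resolve_profile(loader, uid) for uid in range(100)))
```

Running `asyncio.run(main())` resolves all 100 profiles while `fetch_profiles` is invoked once with all 100 ids. Creating the loader per request keeps the memoization cache from leaking data between users.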

Persisted Queries for Production

Ad-hoc GraphQL queries (arbitrary query strings from clients) create two production problems: security (clients can send arbitrarily expensive queries) and performance (with an unbounded set of query strings, the server gets little benefit from caching query parsing and validation results). Persisted queries solve both.

A persisted query is a pre-registered query identified by a hash. The client sends the hash instead of the full query string. The server looks up the pre-approved query by hash and executes it. Arbitrary query strings are rejected. This eliminates query complexity attacks and enables operation-level caching.
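
A minimal sketch of the lookup, assuming SHA-256 as the hash and a hypothetical `queryHash` request field (real clients and servers define their own wire format):

```python
import hashlib


class PersistedQueryRegistry:
    """Maps SHA-256 hashes to pre-approved query strings."""

    def __init__(self):
        self._queries = {}

    def register(self, query):
        """Called at build or deploy time; returns the hash the client sends."""
        query_hash = hashlib.sha256(query.encode("utf-8")).hexdigest()
        self._queries[query_hash] = query
        return query_hash

    def resolve(self, request):
        """Return the approved query for a request, or raise if not allowed."""
        if "query" in request:
            # Arbitrary query strings are rejected outright.
            raise PermissionError("ad-hoc query strings are not accepted")
        query = self._queries.get(request.get("queryHash"))
        if query is None:
            raise KeyError("unknown persisted query hash")
        return query
```

Since the set of hashes is finite and known ahead of time, the server can also key parsed documents, cost estimates, or response caches on the hash.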

Apollo's persisted query mechanism and Relay's query ID system both implement this pattern. For production GraphQL APIs, persisted queries are not optional if you care about security and performance.

tRPC and the Full-Stack TypeScript Option

For teams building TypeScript full-stack applications, tRPC deserves mention as a third option. tRPC provides end-to-end type safety between a TypeScript backend and TypeScript frontend without a code generation step. You define procedures on the server; the client calls them with full type inference. No separate schema language, no SDL, no codegen step.

tRPC is narrower in scope than GraphQL — it is not a general API layer, it is a type-safe RPC mechanism for TypeScript monorepos. If your frontend and backend are in the same repo and both TypeScript, tRPC can give you better developer experience than GraphQL with less ceremony. If you need a public API or non-TypeScript clients, tRPC does not apply.

MCP: The Third Contender for AI-Era APIs

Anthropic's Model Context Protocol (MCP) is emerging as the standard interface for AI agent tooling. MCP defines a standard way for AI agents to discover and call tools — think of it as a REST API for AI agents, with a discovery layer that enables zero-configuration tool use.
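
The discover-then-call flow can be sketched as a tiny in-process dispatcher. MCP messages are JSON-RPC 2.0, with `tools/list` for discovery and `tools/call` for invocation; everything else here is an assumption for illustration, including the `get_order_status` tool and the `handle_message` function. A real server would use an MCP SDK, perform the initialization handshake, and run over a transport, all of which this sketch omits.

```python
import json

# Hypothetical tool; the name, schema, and data are illustrative only.
TOOLS = {
    "get_order_status": {
        "description": "Look up the status of an order by id.",
        "inputSchema": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
        "handler": lambda args: {"o-1": "shipped"}.get(args["order_id"], "unknown"),
    }
}


def _error(request, code, message):
    payload = {"jsonrpc": "2.0", "id": request.get("id"),
               "error": {"code": code, "message": message}}
    return json.dumps(payload)


def handle_message(raw):
    """Dispatch one JSON-RPC 2.0 request and return the response as a string."""
    request = json.loads(raw)
    method, params = request["method"], request.get("params", {})

    if method == "tools/list":
        # Discovery: the agent learns tool names and input schemas at runtime.
        tools = [
            {"name": name, "description": tool["description"],
             "inputSchema": tool["inputSchema"]}
            for name, tool in TOOLS.items()
        ]
        result = {"tools": tools}
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(request, -32602, "Unknown tool")
        text = tool["handler"](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return _error(request, -32601, "Method not found")

    return json.dumps({"jsonrpc": "2.0", "id": request.get("id"), "result": result})
```

The agent needs no hand-written client for this server: it lists the tools, reads each JSON Schema, and constructs the call arguments itself.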

For teams building products that AI agents will consume, whether your own agents or third-party agent frameworks, MCP is the interface to publish. Major model platforms and agent frameworks (Claude, GPT-4, AutoGen, LangChain) either already support MCP or are adding it. A team that exposes its product's functionality as an MCP server is positioning for a world where AI agents are a primary consumer of APIs alongside human-operated clients.

| Protocol | Best consumer | Key strength | Key weakness | 2026 momentum |
|---|---|---|---|---|
| REST | Public APIs, services, third-party devs | HTTP caching, simplicity, ubiquity | Over/under-fetching for complex clients | Stable, core infrastructure |
| GraphQL | BFF, client-driven data needs | Flexible queries, type system, single endpoint | N+1 without DataLoader, caching complexity | Strong in enterprise |
| tRPC | TypeScript full-stack, internal RPCs | Zero-codegen type safety | TypeScript only, no public API use | Growing in TS ecosystem |
| MCP | AI agents, LLM tool calling | AI-native discovery, zero-config for agents | Immature ecosystem, limited auth patterns | Rapidly growing |

The Practical Decision

The question is not "which is better." The question is "who is consuming this API and what are their access patterns?" Public API for developers: REST with OpenAPI docs. Client-facing layer for a complex product with a TypeScript frontend: GraphQL or tRPC. Internal service-to-service: REST or gRPC. AI agents as primary consumers: MCP.

- Public API with third-party developers: REST + OpenAPI

- BFF for mobile + web with different data needs: GraphQL

- TypeScript full-stack product, same repo, no external consumers: tRPC

- Inter-service communication, performance-critical: gRPC

- AI agent tooling, LLM integration: MCP

- All of the above can coexist — the mistake is picking one for everything

Frequently Asked Questions

GraphQL vs REST: which is better in 2026?

Neither is universally better. REST wins for public APIs, simple CRUD, and where HTTP caching is critical. GraphQL wins for BFF layers aggregating multiple services, flexible client data needs, and complex graph traversals. Most production systems use both.

When should I use GraphQL instead of REST in 2026?

Use GraphQL when building a BFF (Backend For Frontend) aggregating multiple services, when mobile and web clients need different data subsets, or when clients traverse complex entity relationships in varying ways. Plan for DataLoader-style batching from the start, since nested resolvers are where N+1 query problems appear. Avoid GraphQL for simple CRUD or public APIs.

Is GraphQL dying in 2026?

No, but adoption has plateaued. GraphQL is consolidating into its ideal use case: internal API aggregation layers and developer tooling. REST remains dominant for public APIs. The hype cycle has ended; usage is now driven by genuine fit.

How does MCP compare to GraphQL and REST?

MCP (Model Context Protocol) is not a general-purpose API protocol. It is specifically designed for exposing tools and context to LLMs, and it complements REST and GraphQL rather than replacing them. REST and GraphQL serve conventional application clients; MCP serves AI models.

What are the main operational challenges of running GraphQL in production?

GraphQL introduces query complexity attacks (deep nested queries can overload resolvers), makes HTTP caching harder since queries go via POST, requires field deprecation management, and needs query depth limiting and persisted queries for production safety. These operational concerns are why many teams stick to REST for public APIs.
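
Query depth limiting can be sketched as follows. This is a deliberately naive version that counts brace nesting in the raw query string; `query_depth`, `enforce_depth_limit`, and `MAX_DEPTH` are hypothetical names. It does not expand fragments and it also counts braces in argument object literals, so a production check would walk the parsed query AST instead.

```python
MAX_DEPTH = 5  # hypothetical limit; tune to the deepest legitimate query


def query_depth(query):
    """Return the maximum brace nesting depth of a GraphQL query string.

    Naive: skips string literals but does not parse the query.
    """
    depth = max_depth = 0
    in_string = False
    previous = ""
    for char in query:
        if char == '"' and previous != "\\":
            in_string = not in_string
        elif not in_string:
            if char == "{":
                depth += 1
                max_depth = max(max_depth, depth)
            elif char == "}":
                depth -= 1
        previous = char
    return max_depth


def enforce_depth_limit(query, max_depth=MAX_DEPTH):
    """Raise ValueError before execution if the query nests too deeply."""
    depth = query_depth(query)
    if depth > max_depth:
        raise ValueError(f"query depth {depth} exceeds limit {max_depth}")
    return depth
```

Rejecting the query before any resolver runs is the point: the cost of the check is proportional to the query text, not to the data it would have touched.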