Efficiency at Scale: NVIDIA, Energy Leaders Accelerating Power‑Flexible AI Factories to Fortify the Grid

What Happened

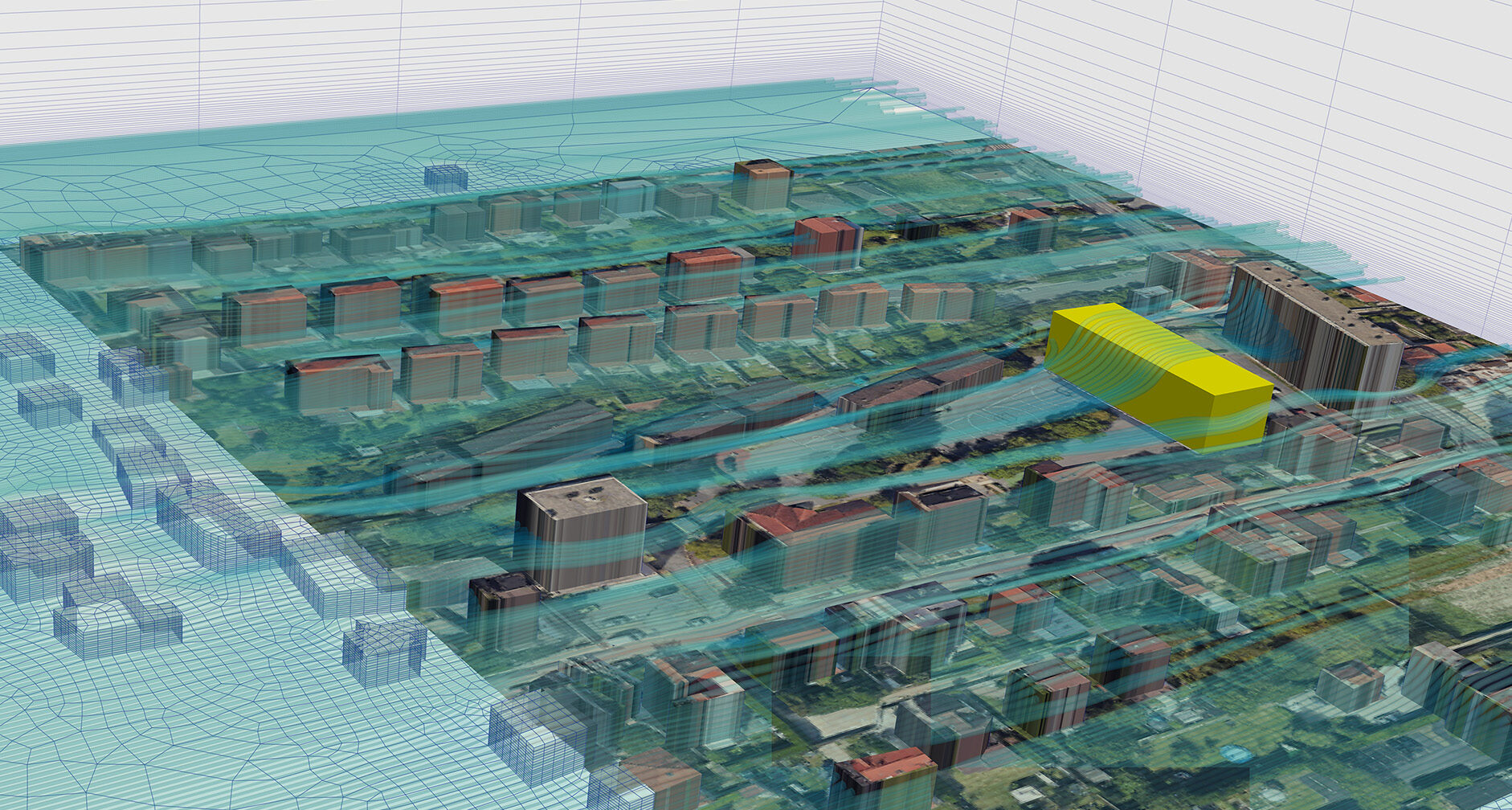

CERAWeek — dubbed the Davos of energy — is where policymakers, producers, technologists and financiers gather to discuss how the world powers itself next. NVIDIA and Emerald AI unveiled at the conference last week a new way forward — treating AI factories not as static power loads but as flexible,

Our Take

At CERAWeek, NVIDIA and Emerald AI framed AI data centers as grid-flexible loads rather than fixed draws. The model: clusters shed or absorb power on demand, turning compute into a grid stabilization tool.

For teams running batch jobs such as H100 fine-tuning or large embedding pipelines, off-peak grid pricing becomes a real scheduling variable. Most engineers treat job scheduling purely as a queue-depth problem. Cloud providers are starting to pass grid incentives through, and once they do, the cheapest time to run a job depends on grid conditions as well as queue depth.

Multi-GPU training teams on AWS or Azure should watch whether spot pricing starts correlating with grid demand signals. Real-time inference teams can ignore this entirely.
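One rough way to watch for that correlation is to log hourly spot prices next to a grid demand series and compute a Pearson coefficient. The sketch below assumes you already collect both series yourself; the sample numbers and variable names are invented for illustration and do not come from AWS, Azure, or any grid operator.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / sqrt(var_x * var_y)


# Hypothetical hourly samples, made up for this example only.
spot_usd_per_hour = [2.10, 2.05, 2.00, 2.40, 2.90, 3.10]
grid_demand_gw = [31.0, 30.2, 29.8, 34.5, 38.9, 40.1]

r = pearson(spot_usd_per_hour, grid_demand_gw)
print(f"spot price vs. grid demand: r = {r:.2f}")
```

A coefficient near 1 over several weeks of real data would suggest prices are tracking demand. A handful of points like the ones above proves nothing, and correlation alone does not show that grid signals are what drives the price.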

What To Do

Schedule batch embedding runs during off-peak grid windows, not by queue depth alone, since H100 spot pricing on AWS may be starting to reflect grid demand signals.
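As a minimal sketch of what price-aware scheduling could look like, the function below picks the start hour that minimizes total cost for a job of fixed length, given a 24-hour price forecast. The function name, the price curve, and the assumption that you have an hourly forecast at all are hypothetical; it also ignores queue depth, which a real scheduler would weigh alongside price.

```python
def cheapest_start_hour(hourly_price, job_hours):
    """Return (start_hour, total_cost) for the cheapest contiguous window.

    hourly_price: one price per hour of the day (index 0 = midnight).
    job_hours: how many consecutive hours the job needs.
    The window may wrap past the end of the list into the next day.
    """
    n = len(hourly_price)
    if not 0 < job_hours <= n:
        raise ValueError("job_hours must be between 1 and len(hourly_price)")
    best_start, best_cost = 0, float("inf")
    for start in range(n):
        cost = sum(hourly_price[(start + i) % n] for i in range(job_hours))
        if cost < best_cost:  # strict comparison keeps the earliest tie
            best_start, best_cost = start, cost
    return best_start, best_cost


# Hypothetical forecast: cheap overnight, expensive by day, mid-priced evening.
forecast = [2.0] * 6 + [3.0] * 12 + [2.5] * 6

start, cost = cheapest_start_hour(forecast, job_hours=4)
print(f"start at hour {start}, estimated cost {cost:.2f}")
```

The brute-force scan is fine for 24 slots. Spot interruptions and deadline constraints are left out to keep the example short.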

What Skeptics Say

Conference-stage energy partnerships are PR before they are engineering; NVIDIA's per-rack power draw continues rising with each GPU generation and no announced initiative has reversed that trend. 'Power-flexible' is undefined enough to mean almost nothing.